Human-driven AI research that grows people and organizations, not replaces them.

For the public

See how human-driven AI research shapes everyday experiences with technology.

For industry partners

Partner with CHARM on human-centered AI tools, decision-support, and evaluation.

About CHARM

The Center for Human-Driven AI Research and Methods

The Center for Human-Driven AI Research and Methods (CHARM) is a multi-faculty center at the Harvard John A. Paulson School of Engineering and Applied Sciences. We focus on human–AI interaction design and computational methods that leave people stronger, not just faster in the moment.

Why CHARM now?

AI is often framed as a race for speed: replace human labor with computation, produce more, finish faster. Yet this approach of optimizing for the immediate term risks losing out in the longer run.

At CHARM, we recognize that a single task is never the whole story. A great summary for a negotiator is just one step in their negotiation journey. A great data analysis for a patient is just one step in their health journey. A great writing support tool for a student is just one step in their learning journey. Each task is a moment in a longer human arc: learning, judgment, health, negotiation, creativity.

In addition to asking “Did the tool complete this step?”, we also ask “Did the person leave stronger after using it?” or “Is the person better able to fulfill their needs and values?”

Our Mission & Strategy

Mission: To create AI tools that put people first, expanding human capability and leaving people better prepared for the next challenge.

Strategy: We make scientific contributions in human-computer interaction, visual computing, and machine learning to design tools that augment human capabilities and unlock what people and organizations can achieve. We move beyond proofs of concept to effective products by collaborating with institutions around the world.

Who are we?

Faculty leading CHARM

CHARM brings together faculty from across Harvard SEAS, united by a focus on human-driven AI methods and interaction design.

Finale Doshi-Velez

Herchel Smith Professor of Computer Science

Reinforcement learning, AI in medicine, decision-making

Krzysztof Gajos

Gordon McKay Professor of Computer Science

Human-computer interaction, accessible computing, intelligent interactive systems

Elena Glassman

Assistant Professor of Computer Science

AI-resilient interfaces, AI safety, human-computer interaction

Hanspeter Pfister

An Wang Professor of Computer Science

Visualization, computer graphics, computer vision

Announcements

Latest from CHARM

Hongjin Lin is giving a talk with AI & Equality!

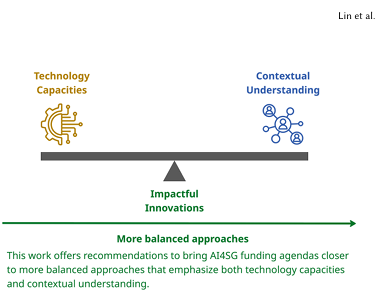

CHARM member Hongjin Lin is giving a talk for AI & Equality on her paper “Funding AI for Good: A Call for Meaningful Engagement”.

You can see all of the details of her talk on the AI & Equality website!

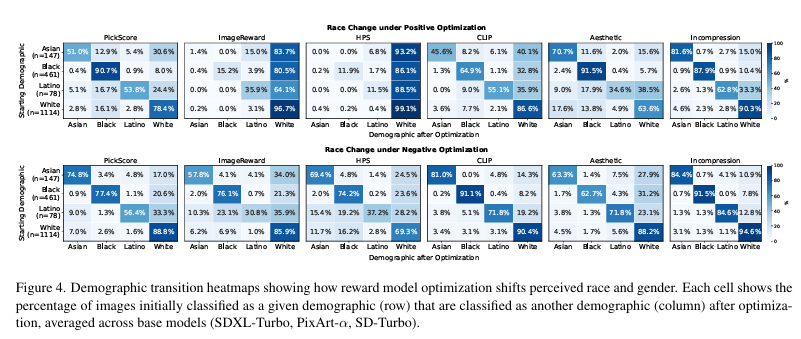

Grace Guo's paper accepted at IEEE CVPR 2026

A paper that CHARM member Grace Guo worked on, “Bias at the End of the Score”, has been accepted at IEEE CVPR 2026! Congratulations, Grace!


Barbara Grosz visits CHARM

On April 23rd, we had the honor of hosting Barbara Grosz, Higgins Research Professor of Natural Sciences Emerita, at CHARM. CHARM members had the chance to discuss AI and its impact on society with her, and how we can move forward using AI as an aid while we as humans remain the decision-makers.

Student Spotlight

Ziwei Gu on AI-Resiliency · May 2026

As generative AI produces information (code, essays, analyses, videos) at unprecedented scale and speed, we face a critical bottleneck: the human capacity to verify alignment and make sense of it all. To address this, Ziwei is shifting the paradigm from “AI that generates” toward “AI that co-creates.” A key theme of his research is AI-resiliency: the design of interfaces that ensure potential AI errors or contextually inappropriate decisions are easily noticed, judged, and recovered from.

This vision has taken shape in his recent publications. In his CHI 2024 work, Ziwei proposed GP-TSM, a novel LLM-powered text rendering technique that improves reading efficiency while minimizing the risks of AI-generated summaries. He then extended this approach to large-scale document analysis with AbstractExplorer (UIST 2025), which leverages LLMs and human cognitive theories to help users navigate patterns across massive text corpora without losing the context of individual documents.

Currently, Ziwei is rethinking the fundamental interfaces of human-AI interaction. He is developing generative controls to allow users to intuitively audit and refine AI outputs. He is also extending the AI-resiliency framework to autonomous agents. By designing mechanisms to help users anticipate the consequences of an agent’s actions before they are finalized, he aims to empower users to remain in control in increasingly complex, agent-driven workflows.


Stay in touch with CHARM

Join our mailing list to hear about new research, events, and opportunities to engage with the Center for Human-Driven AI Research and Methods.